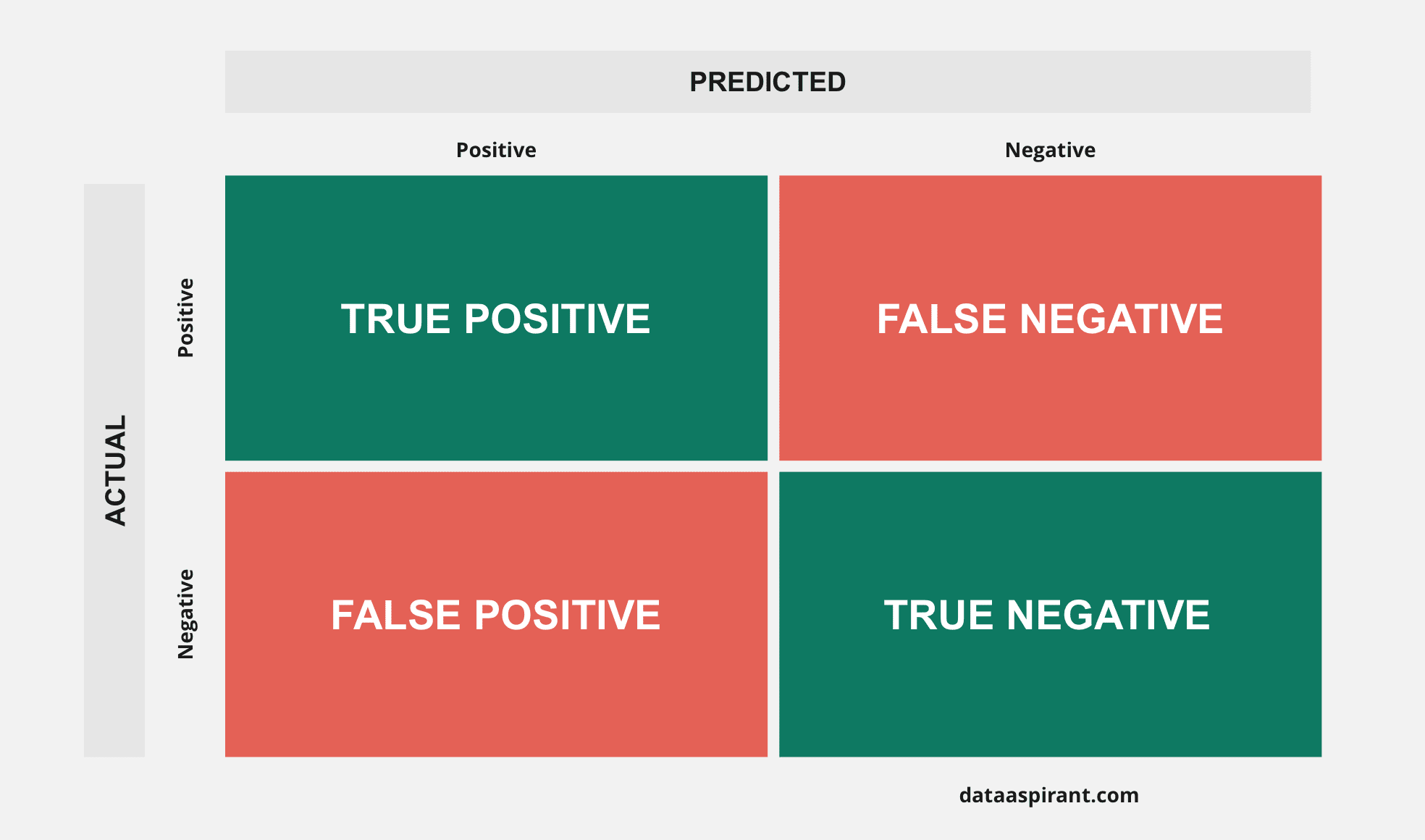

In predictive analytics, a table of confusion (sometimes also called a confusion matrix) is a table with two rows and two columns that reports the number of true positives, false negatives, false positives, and true negatives. This allows more detailed analysis than simply observing the proportion of correct classifications (accuracy). Accuracy will yield misleading results if the data set is unbalanced, that is, when the numbers of observations in different classes vary greatly.

For example, if there were 95 cancer samples and only 5 non-cancer samples in the data, a particular classifier might classify all the observations as having cancer. The overall accuracy would be 95%, but in more detail the classifier would have a 100% recognition rate (sensitivity) for the cancer class but a 0% recognition rate for the non-cancer class. F1 score is even more unreliable in such cases, and here would yield over 97%.

Sensitivity, recall, hit rate, or true positive rate (TPR) is given by $\mathrm{TPR} = \frac{TP}{P} = \frac{TP}{TP + FN} = 1 - \mathrm{FNR}$, where P is the number of real positive cases in the data and FNR is the false negative rate.
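The imbalanced cancer example can be checked with a few lines of arithmetic. The following is a minimal sketch, assuming cancer is treated as the positive class; the variable names are illustrative and do not come from the original text.

```python
# Imbalanced example from the text: 95 cancer samples, 5 non-cancer samples,
# and a classifier that labels every observation as cancer.
# Assumption: "cancer" is the positive class.
tp, fn = 95, 0  # every cancer sample is labelled cancer
fp, tn = 5, 0   # every non-cancer sample is also labelled cancer

accuracy = (tp + tn) / (tp + tn + fp + fn)
sensitivity = tp / (tp + fn)   # recognition rate for the cancer class
specificity = tn / (tn + fp)   # recognition rate for the non-cancer class
precision = tp / (tp + fp)
f1 = 2 * precision * sensitivity / (precision + sensitivity)

print(f"accuracy={accuracy:.3f}")        # accuracy=0.950
print(f"sensitivity={sensitivity:.3f}")  # sensitivity=1.000
print(f"specificity={specificity:.3f}")  # specificity=0.000
print(f"f1={f1:.3f}")                    # f1=0.974
```

Accuracy and F1 both look strong here even though the classifier never recognises a single non-cancer sample, which is why the per-class rates are worth reporting alongside them.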
A confusion matrix is a table layout for visualizing performance; it is also called an error matrix. Terminology and derivations from a confusion matrix:

condition positive (P) -> The number of real positive cases in the data.

condition negative (N) -> The number of real negative cases in the data.

true positive (TP) -> A test result that correctly indicates the presence of a condition or characteristic.

true negative (TN) -> A test result that correctly indicates the absence of a condition or characteristic.

false positive (FP) -> A test result which wrongly indicates that a particular condition or attribute is present.

false negative (FN) -> A test result which wrongly indicates that a particular condition or attribute is absent.

Let us calculate these measures for our confusion matrix of 150 people, where TP = 100, TN = 35, FP = 5 and FN = 10.

Accuracy = (TP + TN) / (TP + TN + FP + FN) = (100 + 35) / 150 = 0.9

Error Rate = (FP + FN) / (TP + TN + FP + FN) = (5 + 10) / 150 = 0.1

True Positive Rate (Recall) = true positives divided by actual YES = TP / (TP + FN) = 100 / 110 ≈ 0.91

F beta score -> ((1 + beta^2) * Precision * Recall) / (beta^2 * Precision + Recall), where Precision = TP / (TP + FP). Common values of beta are 0.5, 1 and 2.

This is how you can derive conclusions from a confusion matrix.
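The calculations above can be reproduced in code. This is a small sketch rather than a reference implementation: the cell counts are the ones that appear in the accuracy and error-rate arithmetic, precision uses the standard definition TP / (TP + FP), and the helper name `f_beta` is hypothetical.

```python
def f_beta(precision, recall, beta=1.0):
    """F-beta score; beta > 1 weights recall more, beta < 1 weights precision more."""
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Cell counts taken from the accuracy and error-rate arithmetic in the text.
tp, tn, fp, fn = 100, 35, 5, 10
total = tp + tn + fp + fn

accuracy = (tp + tn) / total     # (100 + 35) / 150
error_rate = (fp + fn) / total   # (5 + 10) / 150
precision = tp / (tp + fp)       # standard definition, assumed here
recall = tp / (tp + fn)          # true positives divided by actual YES

print(f"accuracy={accuracy:.2f} error_rate={error_rate:.2f}")
print(f"precision={precision:.3f} recall={recall:.3f}")
for beta in (0.5, 1, 2):         # the common beta values named in the text
    print(f"F{beta}={f_beta(precision, recall, beta):.3f}")
```

Accuracy and error rate always sum to 1, so in practice only one of them needs to be reported.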

A confusion matrix is fairly simple to understand, but let us get acquainted with some terminology first. A confusion matrix allows you to see the labels that your model confuses with other labels in your model.

Consider a confusion matrix for binary classification. Let us assume that YES stands for a person testing positive for a disease and NO stands for a person not testing positive for the disease. Therefore, there are two predicted classes: YES and NO.

150 people were tested for the disease. The classifier predicted 105 people to have tested positive and the remaining 45 as negative. However, in actuality, 110 people tested positive and 40 tested negative.

True Positive (TP) -> Observations that were predicted YES and were actually YES.

True Negative (TN) -> Observations that were predicted NO and were actually NO.

False Positive (FP) -> Observations that were predicted YES but were actually NO.

False Negative (FN) -> Observations that were predicted NO but were actually YES.

Accuracy -> The proportion of observations that the classifier predicted correctly.

Error Rate -> The proportion of observations that the classifier predicted incorrectly.

True Positive Rate or Recall (TPR) -> How often the classifier predicts YES when the actual class is YES.

False Positive Rate (FPR) -> How often the classifier predicts YES when the actual class is NO.

True Negative Rate (TNR) -> How often the classifier predicts NO when the actual class is NO.
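To make the definitions above concrete, the four cells can be counted directly from paired lists of actual and predicted labels. The sketch below is illustrative only: the helper `confusion_counts` is hypothetical, and the label lists are constructed so that they match the totals in the example (110 actual YES, 40 actual NO, 105 predicted YES, 45 predicted NO), assuming the cell counts 100, 10, 5 and 35 used in this post's accuracy and error-rate calculations.

```python
from collections import Counter


def confusion_counts(actual, predicted, positive="YES"):
    """Count TP, FP, FN and TN from paired actual/predicted labels."""
    counts = Counter()
    for a, p in zip(actual, predicted):
        if p == positive:
            counts["TP" if a == positive else "FP"] += 1
        else:
            counts["FN" if a == positive else "TN"] += 1
    return counts


# Synthetic labels built to match the example's totals (an assumption,
# not real patient data): 100 TP, 10 FN, 5 FP, 35 TN.
actual = ["YES"] * 110 + ["NO"] * 40
predicted = ["YES"] * 100 + ["NO"] * 10 + ["YES"] * 5 + ["NO"] * 35

c = confusion_counts(actual, predicted)
tpr = c["TP"] / (c["TP"] + c["FN"])  # predicted YES when actually YES
fpr = c["FP"] / (c["FP"] + c["TN"])  # predicted YES when actually NO
tnr = c["TN"] / (c["TN"] + c["FP"])  # predicted NO when actually NO

print(dict(c))
print(f"TPR={tpr:.3f} FPR={fpr:.3f} TNR={tnr:.3f}")
```

FPR and TNR share the same denominator (all actual NO cases), so they always sum to 1.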

A confusion matrix is a table that shows how well a classification model performs on the test data.
